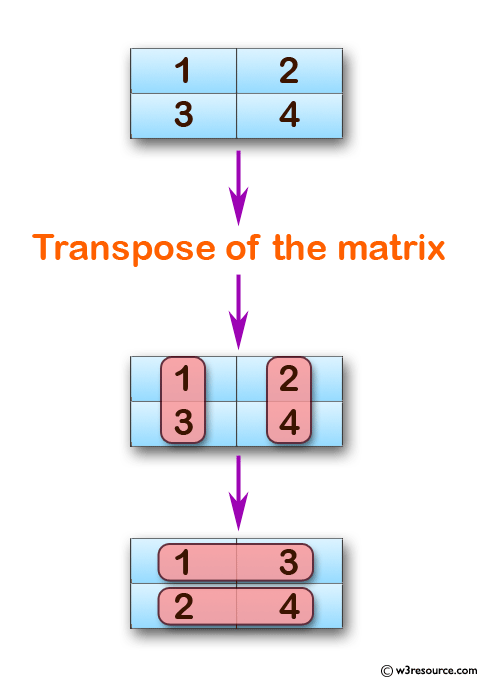

I've touched on the idea before, but now that we've seen what a transpose is, and we've taken transposes of matrices, there's no reason why we can't take the transpose of a vector, or a column vector in this case.

What is the transpose of a vector v in Rn going to look like? Well, if you think of v as an n by 1 matrix, which it is, it has n rows and one column. Then what are we going to get? We're going to have a 1 by n matrix when you take the transpose of it. It will be equal to the row v1, v2, all the way to vn.

And you might remember, we've already touched on this with a lot of matrices before. When we wrote out the rows of those matrices, we called them the transposes of some column vectors: a1 transpose, a2 transpose, all the way down to an transpose. I had those row vectors, and I could have just called them the transposes of column vectors, just like that. In some ways that's a better way to do it, because we've defined all these operations around column vectors, so you can always refer to the transpose of the transpose and then apply those operations.

Now let's think about what happens when you operate with this vector, or take its transpose. Say I have another vector here that's w, and it's also a member of Rn. The dot product we're already, I think, reasonably familiar with. v dot w is equal to what? It is equal to v1 times w1, plus v2 w2, and you just keep going all the way to vn wn. That's the definition of the dot product of two column vectors.

How does that relate to the transpose of v? Well, we could take the transpose of v and do a matrix multiplication: v1, v2, all the way to vn, so this is v transpose, and I take the product of that with w. If I view these just as matrices, is this matrix-matrix product well defined? Here, the first one is a 1 by n matrix: I have one row and n columns. The second one, w, is an n by 1 matrix, so the product is defined and it is a 1 by 1 matrix. What is it going to look like? It's going to be equal to v1 w1 plus v2 w2, plus all the way to vn wn. It's only going to have one entry, so we could write it as just a 1 by 1 matrix.

That is the same sum as v dot w, which is the same thing as w dot v. So these things are equivalent: v dot w is the same thing as the transpose of v times w, v transpose times w, as just a matrix-matrix product. View v as a matrix, take its transpose, and then take the product of that matrix with w.

Just to be clear, these are dot products here in this identity, which I will rewrite here and onward as <Ax, y> = <x, At y>, where <u, v> is the dot product of u and v, and At is the transpose of A.

One way to view this is in terms of transformations between Rn and Rm. A is a linear transformation from Rn to Rm, and At is from Rm to Rn. <u, v> could be viewed as a way of measuring how "in line" the vectors u and v are with each other. So the LHS of the equality is a measure of how "in line" the image of x under A is with y, or how "in line" the product A*x is with y. And in like fashion the RHS is a measure of how "in line" the image of y under At is with x, or how "in line" the product At*y is with x. That these "measures of alignment" are equal is the useful bit I imagine will be leveraged in the discussions to come.

In summary, A: Rn -> Rm, At: Rm -> Rn, x is in Rn, y is in Rm, and <Ax, y> = <x, At y>. So whether we send x to y's home (Rm) via A, or send y to x's home (Rn) via At, we end up with two vectors which are "equally aligned" in either case.
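As a quick numerical check of the two facts discussed above, here is a minimal sketch in Python with NumPy. NumPy and the specific numbers are assumptions chosen for illustration, not something the lecture uses. It treats v and w as n by 1 matrices, confirms that v transpose times w is a 1 by 1 matrix whose single entry is v dot w, and then checks that the dot product of Ax with y equals the dot product of x with At y for one small example.

```python
import numpy as np

# Column vectors in R^3, stored as 3-by-1 matrices (illustrative values).
v = np.array([[1], [2], [3]])
w = np.array([[4], [5], [6]])

# Ordinary dot product: v1*w1 + v2*w2 + v3*w3.
dot_vw = int(np.dot(v[:, 0], w[:, 0]))

# Matrix-matrix product of a 1-by-3 matrix with a 3-by-1 matrix.
vt_w = v.T @ w  # result is a 1-by-1 matrix

# A maps R^3 to R^2, so its transpose maps R^2 back to R^3.
A = np.array([[1, 2, 3],
              [4, 5, 6]])
x = np.array([1, 0, -1])  # lives in R^3
y = np.array([2, 1])      # lives in R^2

lhs = int(np.dot(A @ x, y))    # dot product taken in R^2
rhs = int(np.dot(x, A.T @ y))  # dot product taken in R^3

print(vt_w.shape, vt_w[0, 0], dot_vw)
print(lhs, rhs)
```

The second check follows from the first: writing the dot product of Ax with y as (Ax) transpose times y gives x transpose times At times y, which is the dot product of x with At y.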
